Overview

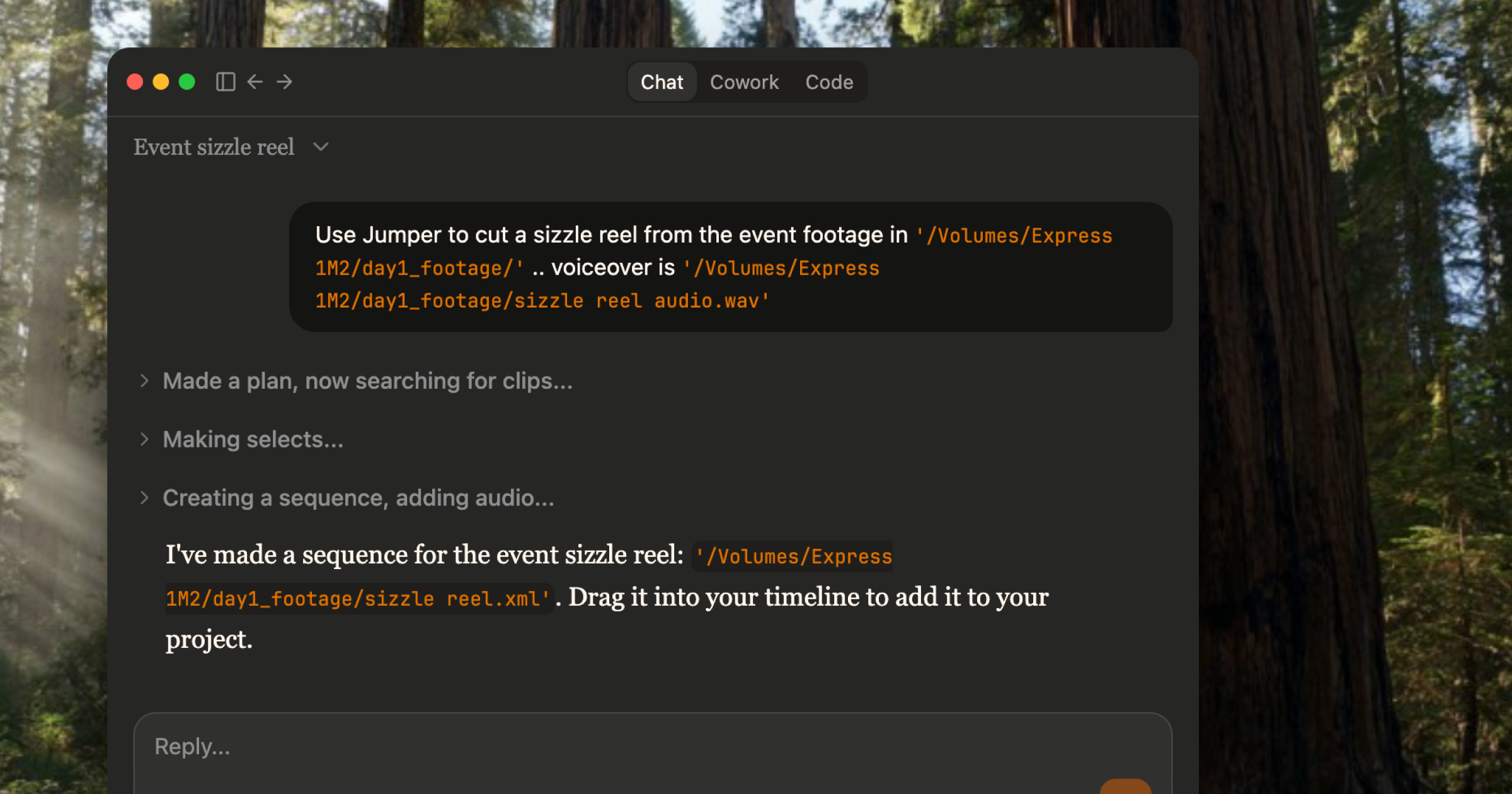

Agentic editing lets AI agents operate Jumper directly (searching your footage, retrieving clips, and triggering workflow actions) through an MCP server. Jumper integrates with Claude Code Desktop, Claude Code CLI, OpenAI Codex Desktop, and LM Studio, so you can give an agent a task in natural language and have it break the work into steps and execute them. Instead of clicking through searches and exports yourself, you describe what you want. The agent queries Jumper’s backend, orchestrates the workflow, and produces the result (clips, sequences, exports) without you performing each step manually.

What agents can do

Same as you

Agents can use Jumper much like you do through the interface:

- Search visually across analyzed footage using natural language

- Search transcriptions for spoken words and phrases

- Retrieve clip segments with start and end times

- Find similar clips by text, image, or frame

- Find clips by face recognition (people search)

- Trigger workflow actions such as exporting a sequence to Premiere Pro, Final Cut Pro, DaVinci Resolve, or Avid Media Composer
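
As a rough illustration of what such a request could look like on the wire: MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method. The sketch below builds one request and decodes one reply. The tool name `search_visual`, its arguments, the file path, and the shape of the returned segments are assumptions made for illustration, not Jumper's documented tool list.

```python
import json


def build_tool_call(request_id: int, tool: str, arguments: dict) -> dict:
    """Wrap a tool invocation in the JSON-RPC 2.0 envelope used by MCP."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }


def clip_segments(reply: dict) -> list:
    """Decode the text payload of a tool result into a list of clip segments."""
    return json.loads(reply["result"]["content"][0]["text"])


# Hypothetical tool name and arguments; the real ones may differ.
request = build_tool_call(1, "search_visual", {"query": "Anna smiling", "limit": 5})

# Hypothetical reply: clip segments with start and end times, sent as text.
hits = [{"path": "/footage/day1/A001.mov", "start": 12.4, "end": 17.9}]
response = {
    "jsonrpc": "2.0",
    "id": 1,
    "result": {"content": [{"type": "text", "text": json.dumps(hits)}]},
}

print(json.dumps(request, indent=2))
print(clip_segments(response))
```

In practice the agent client builds and sends these messages for you; the sketch only shows the kind of data that travels in each direction.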

Beyond the UI

Because the agent orchestrates Jumper programmatically, it can also do things the normal interface does not:

- Export scenes as individual files to a folder

- Export a set of clips as a sequence for your editing software (Premiere XML, FCPXML, etc.)

Orchestration

The agent acts as the orchestrator: you give it a complex task, and it breaks it down into smaller steps and runs them in the right order. The agent decides how to search, filter, and export, then executes the workflow end to end.

Example use cases

Agentic editing can speed up routine media production tasks:

- Finding B-roll that matches a script

- Pulling every clip of a certain person across a large library

- Creating sequences of selects for review or rough cuts

- VO + B-roll workflows (e.g. sizzle reels from event footage with a voiceover track)
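
To make the orchestration idea concrete, here is a minimal sketch of how an agent might decompose the first use case above (finding B-roll that matches a script) into search, filter, and export steps. Everything in it is hypothetical: `search_visual` is a stand-in that returns canned results instead of calling Jumper, and the `Clip` type, file paths, and the minimum-length rule are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Clip:
    path: str
    start: float  # seconds
    end: float    # seconds


def search_visual(query: str) -> list[Clip]:
    """Stand-in for a Jumper search; returns canned, made-up results."""
    library = {
        "city skyline at dusk": [Clip("/footage/B012.mov", 3.0, 9.5)],
        "crowd applauding": [
            Clip("/footage/B051.mov", 1.0, 2.0),
            Clip("/footage/B044.mov", 20.0, 24.0),
        ],
    }
    return library.get(query, [])


def pick_broll(script_lines: list[str], min_length: float = 2.0) -> list[Clip]:
    """Step 1: search once per script line. Step 2: drop clips that are too short."""
    selects = []
    for line in script_lines:
        usable = [c for c in search_visual(line) if c.end - c.start >= min_length]
        if usable:
            selects.append(usable[0])  # keep the first usable match
    return selects


def describe_sequence(clips: list[Clip]) -> list[str]:
    """Step 3: stand-in for an export; lists the clips in sequence order."""
    return [f"{c.path} [{c.start:.1f}-{c.end:.1f}]" for c in clips]


script = ["city skyline at dusk", "crowd applauding", "drone shot of a glacier"]
selects = pick_broll(script)
for entry in describe_sequence(selects):
    print(entry)
```

Script lines with no usable match are skipped here; a real agent might instead rephrase the query or report the gap back to you.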

Parallel workflows

Jumper can serve multiple agent sessions at the same time. Because each agent can work on a different task, you can start several jobs in parallel and focus on other work while they complete.

Skills in Agentic Editing

Skills are how you encode repeatable editing workflows for the agent. Each skill is a SKILL.md file that defines when the workflow should be used, what steps it runs, and how results should be returned.
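
As a sketch of what those three parts could look like in a file, the snippet below renders a small SKILL.md and writes it to a temporary folder. It assumes the common convention of opening the file with YAML frontmatter that holds a `name` and `description`. The section headings, the `interview-selects` workflow, and its steps are hypothetical and are not one of Jumper's shipped skills. Where skill folders belong depends on the agent you use, so a temporary directory stands in here.

```python
import tempfile
from pathlib import Path

# Hypothetical layout: frontmatter, then when-to-use, steps, and output sections.
SKILL_TEMPLATE = """\
---
name: {name}
description: {description}
---

## When to use
{when}

## Steps
{steps}

## Output
{output}
"""


def render_skill(name: str, description: str, when: str,
                 steps: list[str], output: str) -> str:
    """Fill the template, numbering the workflow steps in order."""
    numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(steps, 1))
    return SKILL_TEMPLATE.format(
        name=name, description=description, when=when,
        steps=numbered, output=output,
    )


skill_text = render_skill(
    name="interview-selects",
    description="Build a sequence of the strongest interview answers.",
    when="The editor asks for selects from interview footage.",
    steps=[
        "Search transcriptions for the requested topic.",
        "Keep segments longer than five seconds.",
        "Export the segments as a sequence for the editor's software.",
    ],
    output="Report the sequence path and list each clip with its timecodes.",
)

# Temporary location for illustration only.
skill_dir = Path(tempfile.mkdtemp()) / "interview-selects"
skill_dir.mkdir()
(skill_dir / "SKILL.md").write_text(skill_text, encoding="utf-8")
print(skill_text)
```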

Why skills matter

- Predictable behavior — Same workflow runs consistently across sessions and across editors.

- Shorter prompts — Process logic lives in reusable instructions instead of long conversation context.

- Standardized quality — Teams can enforce naming conventions, export formats, and review checks.

- Specialized workflows — Complex or domain-specific steps stay out of the general conversation loop.

What you can customize

- Modify shipped skills — Tune existing workflows for your editorial style (clip length bias, pacing, selection thresholds, export defaults).

- Add new skills — Create workflows for jobs your team repeats often (e.g., social cutdowns, b-roll sequences, interview selects).

Compatibility

Jumper is currently compatible with:

- Claude Code Desktop

- Claude Code CLI

- OpenAI Codex Desktop

- LM Studio

On Privacy

Will my footage be uploaded to AI companies? No.

Here’s how it works: the agent (Claude, Codex, etc.) talks to Jumper via MCP (an open standard for AI agents to “talk” with software). It sends commands like “search for shots of Anna smiling” or “export clips to this folder.”

Jumper runs a local server on your machine (localhost). All the heavy work (visual search, transcription, face recognition) happens on your computer. Your footage never leaves it.

What the agent receives back is metadata only: file paths, timecodes, transcript excerpts, search result lists. The agent orchestrates the workflow; Jumper does the actual analysis locally and returns pointers to where things are, not the footage itself.

For a detailed breakdown of what data the agent receives and how model providers handle it, see Agentic editing and data privacy.

Related

Your first agentic editing export

Step-by-step walkthrough of searching and exporting clips via an AI agent

Settings: AI Agent

Configure and connect Claude or Codex to Jumper