This guide walks through a fully local agentic editing setup with Jumper using LM Studio. The LLM agent runs on your machine, there is no dependency on cloud AI services, and you do not need a paid model subscription to use it.

LM Studio gives you access to many local models. For Jumper, choose a model with Tool Use and Vision support. The Gemma 4 and Qwen 3.5 model families are good options. This guide uses a Gemma 4 model.

For air-gapped networks or absolute privacy requirements, see Agentic editing and data privacy. Once LM Studio and your model are installed, this workflow can run fully locally without an internet connection.

Before you begin

- Jumper is installed and running locally

- You have media analyzed in Jumper if you want to test a real search right away

- You want the model and Jumper tool calls to stay on your machine

Step-by-step

Download LM Studio

Go to lmstudio.ai and download the build for your operating system.

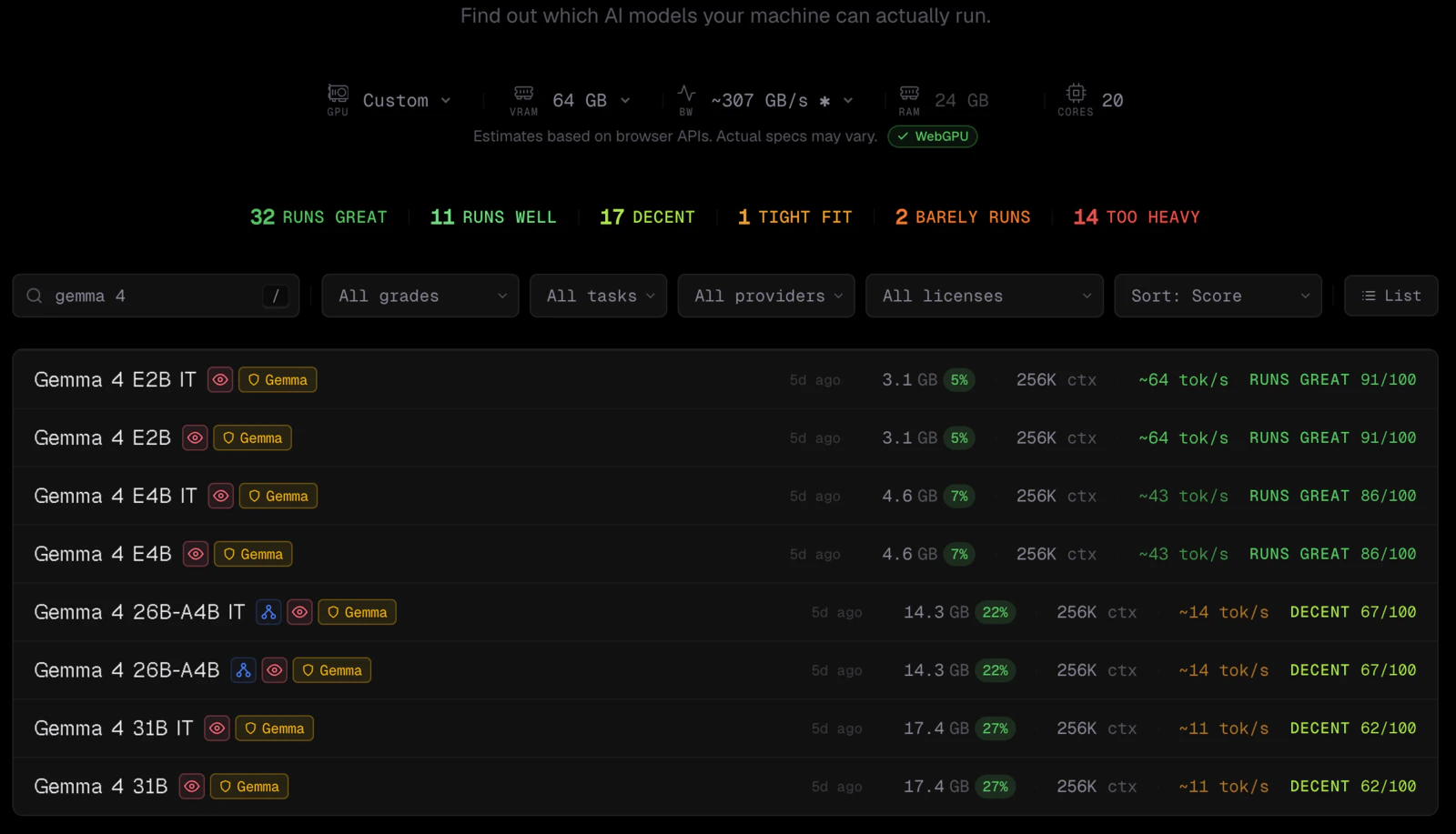

Check which Gemma size fits your machine

Before downloading a large model, search for `gemma 4` on canirun.ai and see which sizes are realistic for your hardware. The little red eye icon marks models with Vision support, which is required for the Jumper workflow.

Open LM Studio's model search

Open LM Studio and click the robot icon in the left sidebar (Model Search).

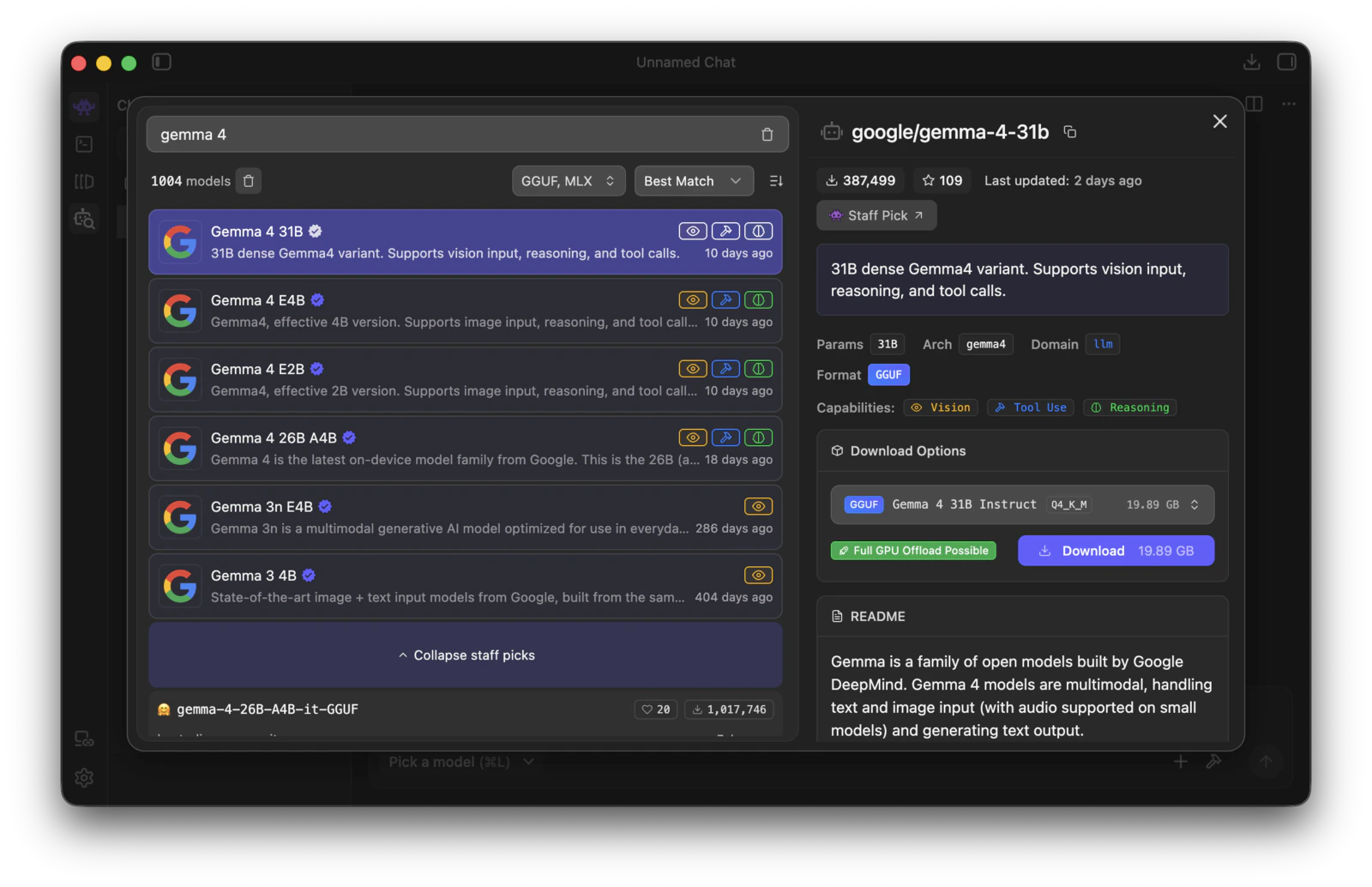

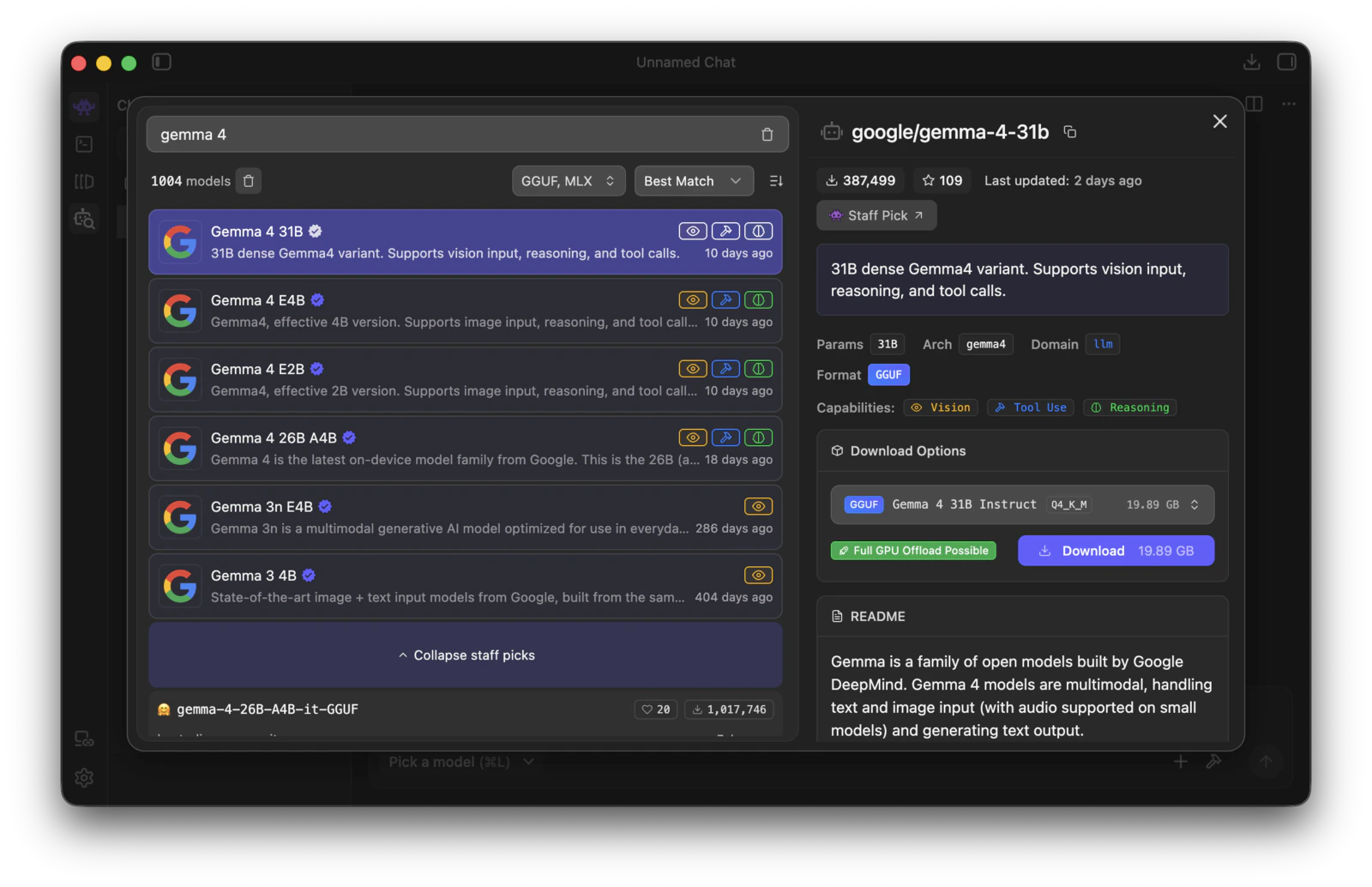

Search for a Gemma 4 model and download it

Search for `gemma 4` and pick a model that shows the capabilities you want. For Jumper, Tool Use and Vision are the key capabilities.

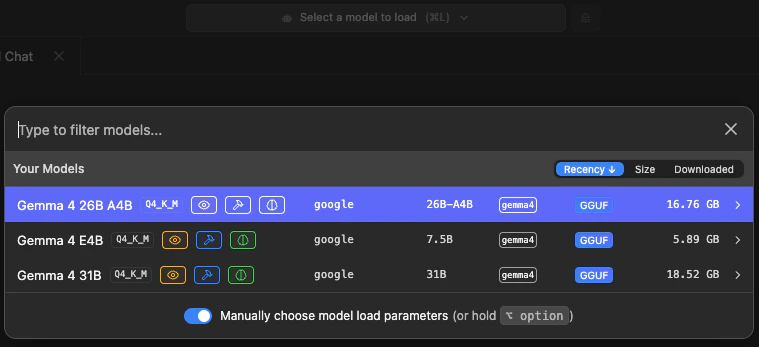

Choose load parameters

Once the download finishes, open the model picker, turn on Manually choose model load parameters, and then click the model you want to load.

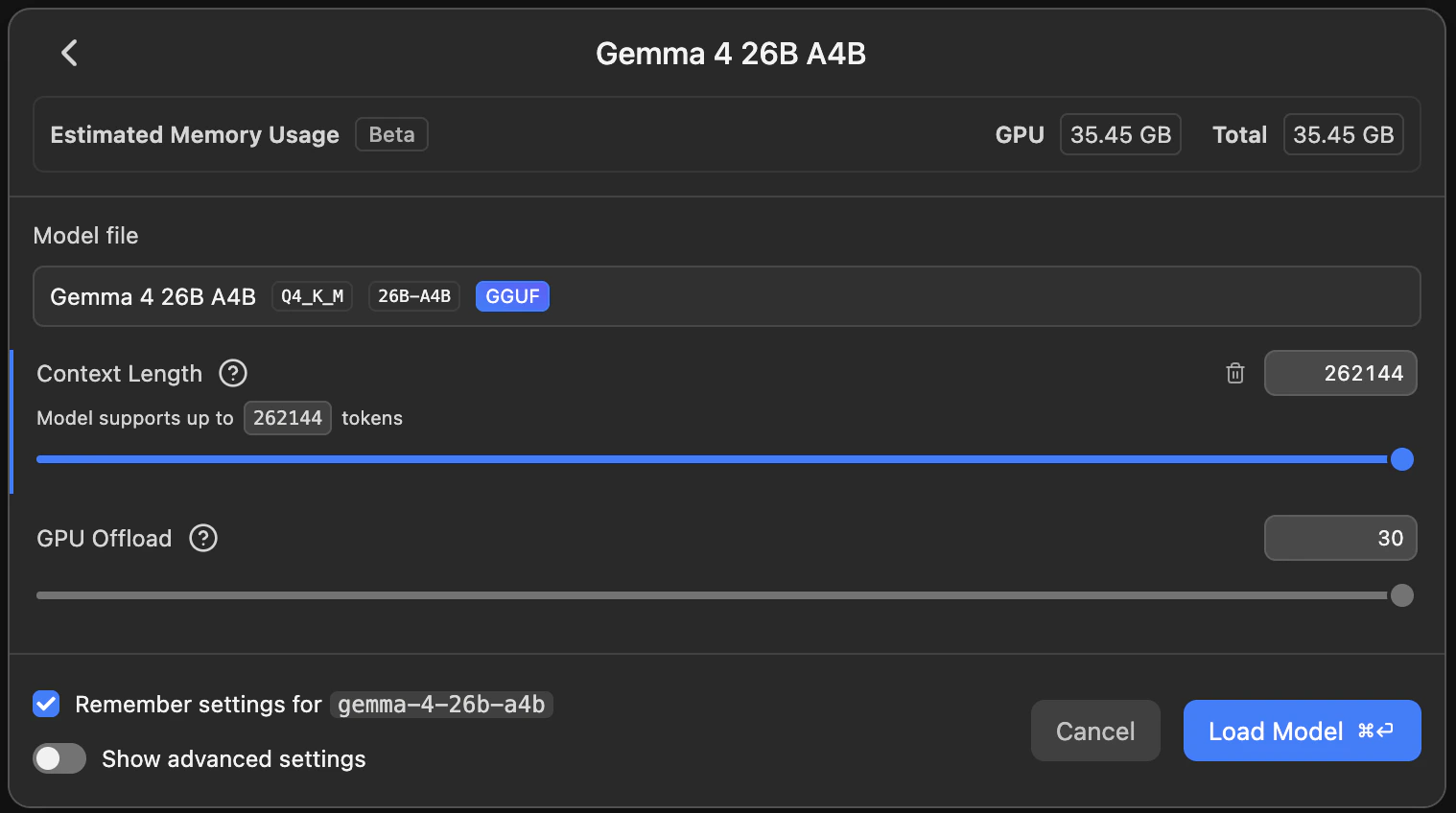

Confirm the load settings

In the load settings view, you will most likely want to increase Context Length. In this example it is set to the maximum value, and Remember settings is enabled so you do not have to repeat this every time you load the model.

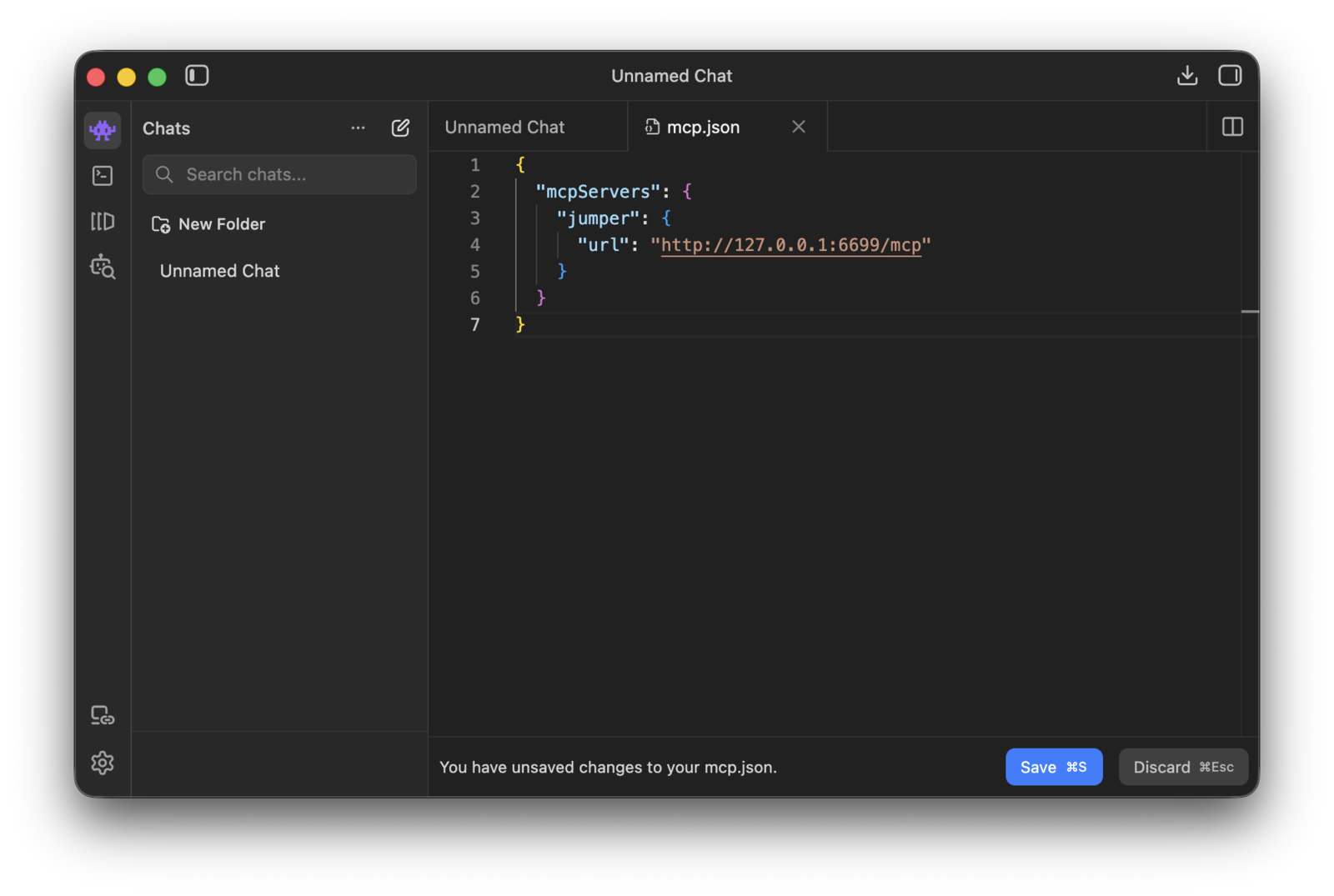

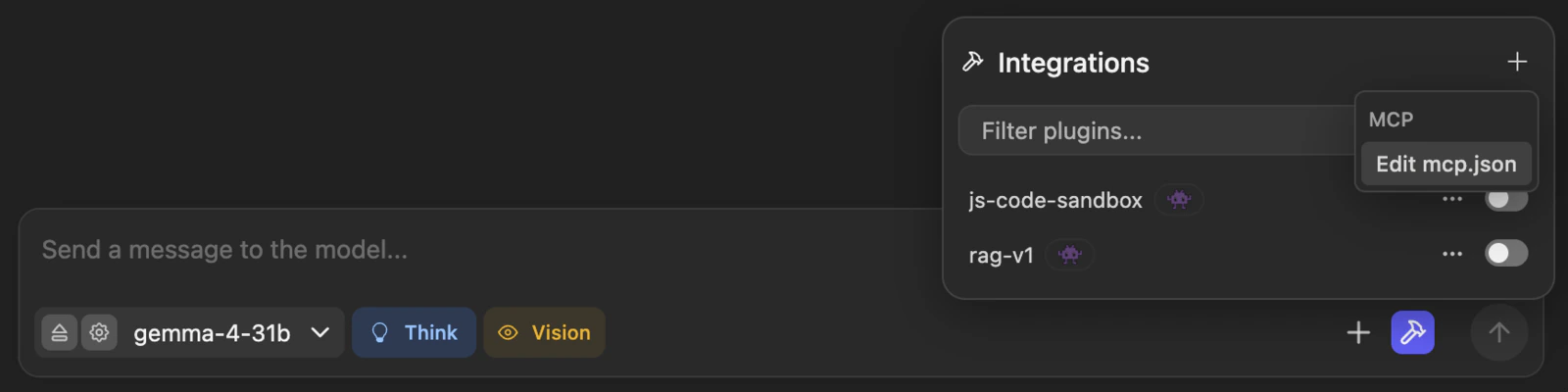

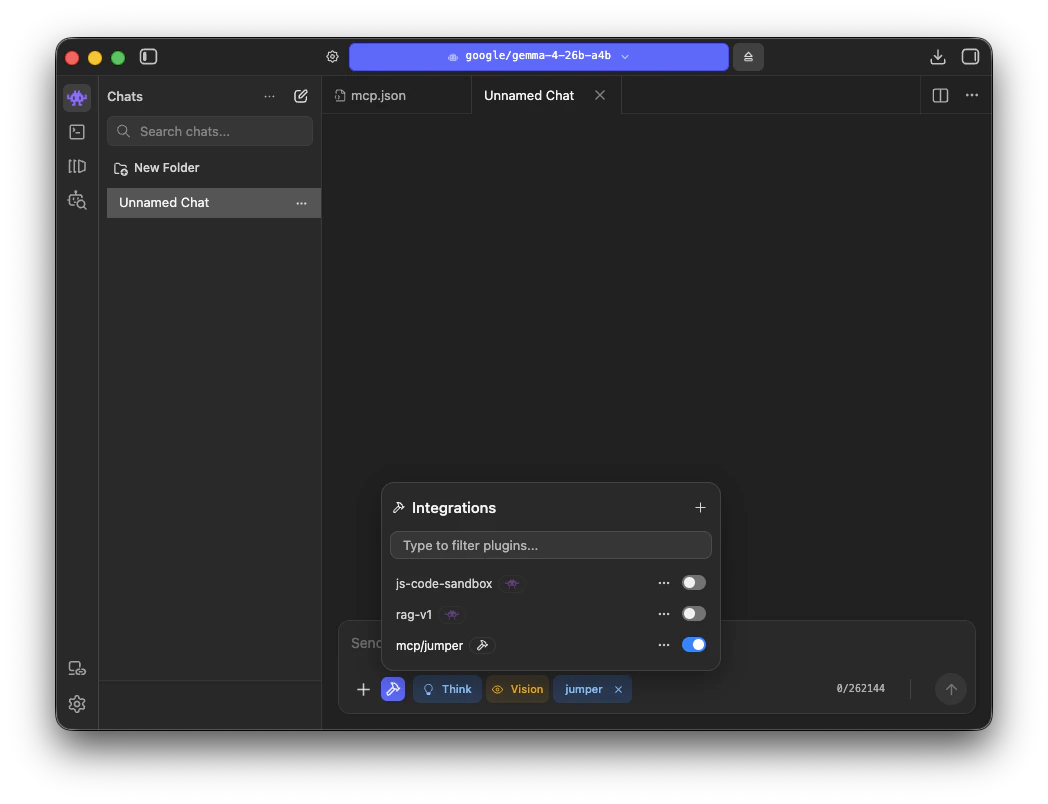

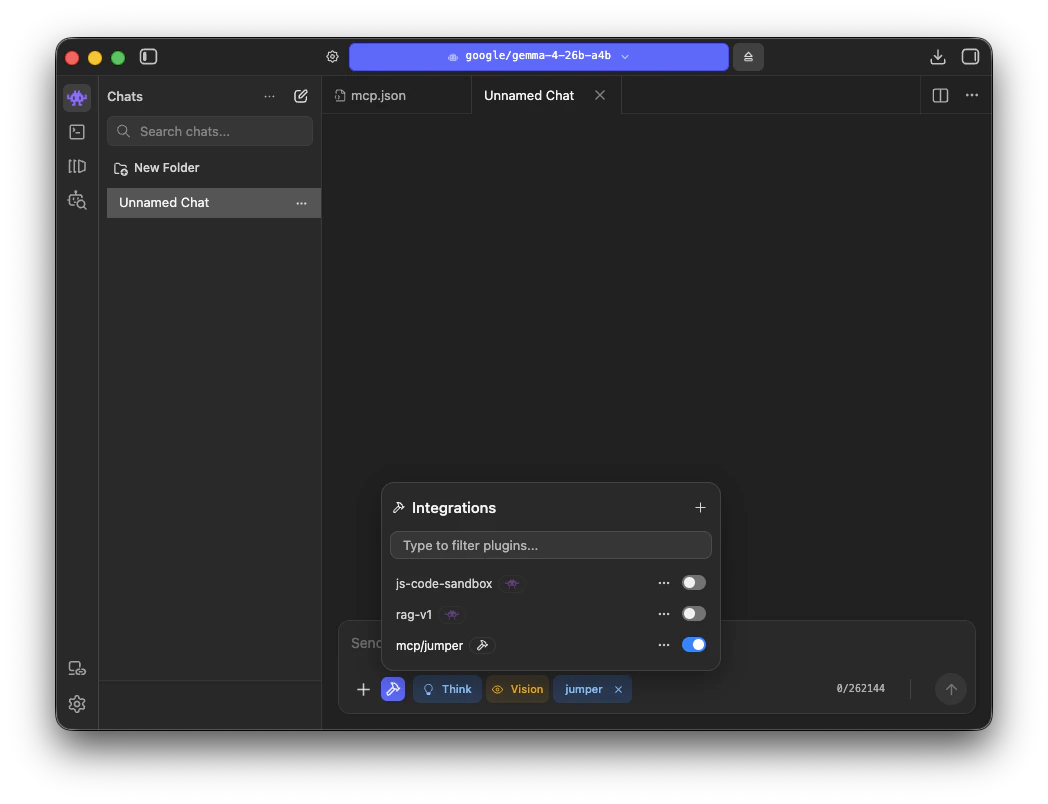

Open the MCP configuration

In LM Studio chat, open Integrations, click the `+` menu, and choose Edit mcp.json.

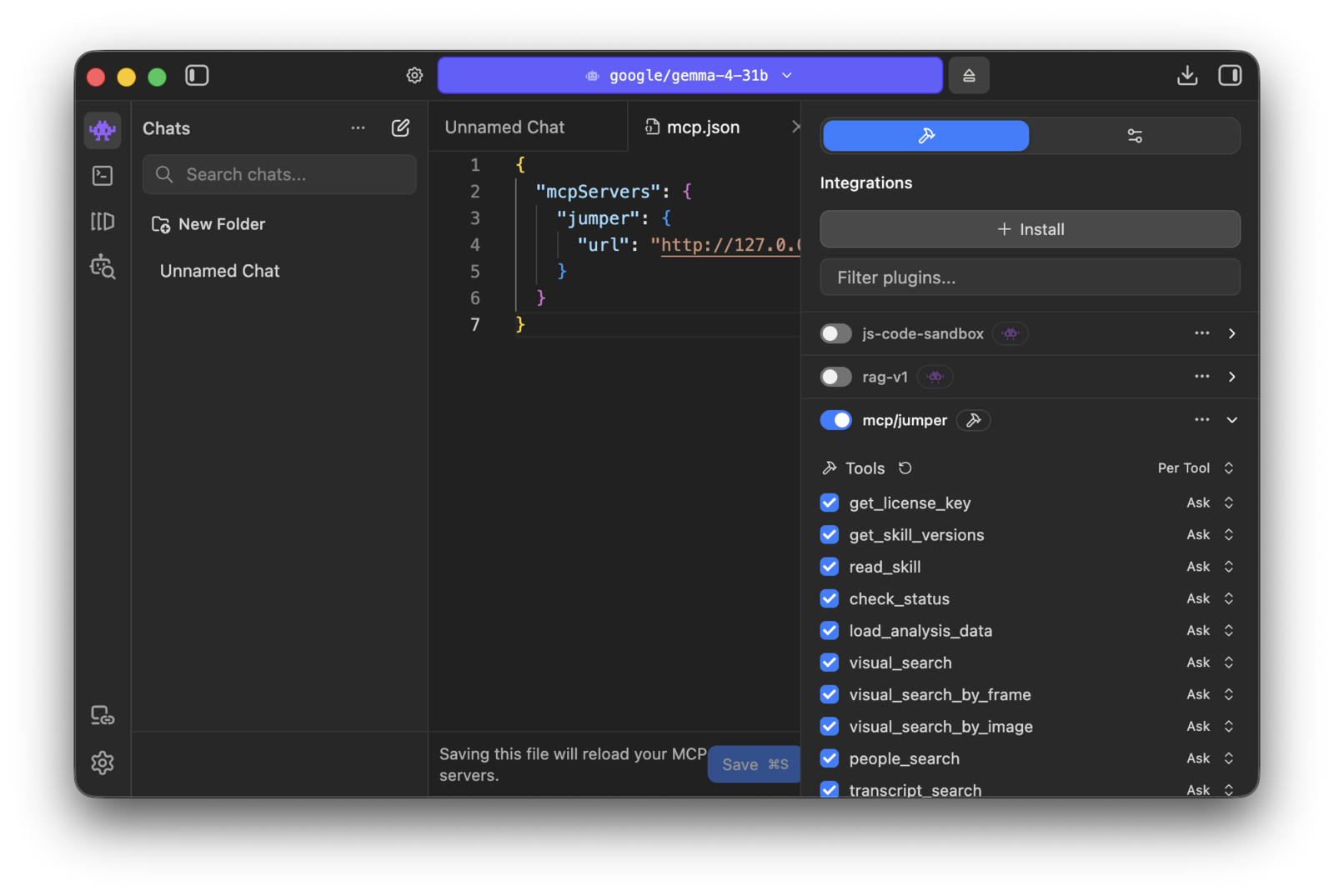

Enable the Jumper integration

After you save `mcp.json`, LM Studio reloads its MCP servers. Open Integrations and turn on `mcp/jumper`.

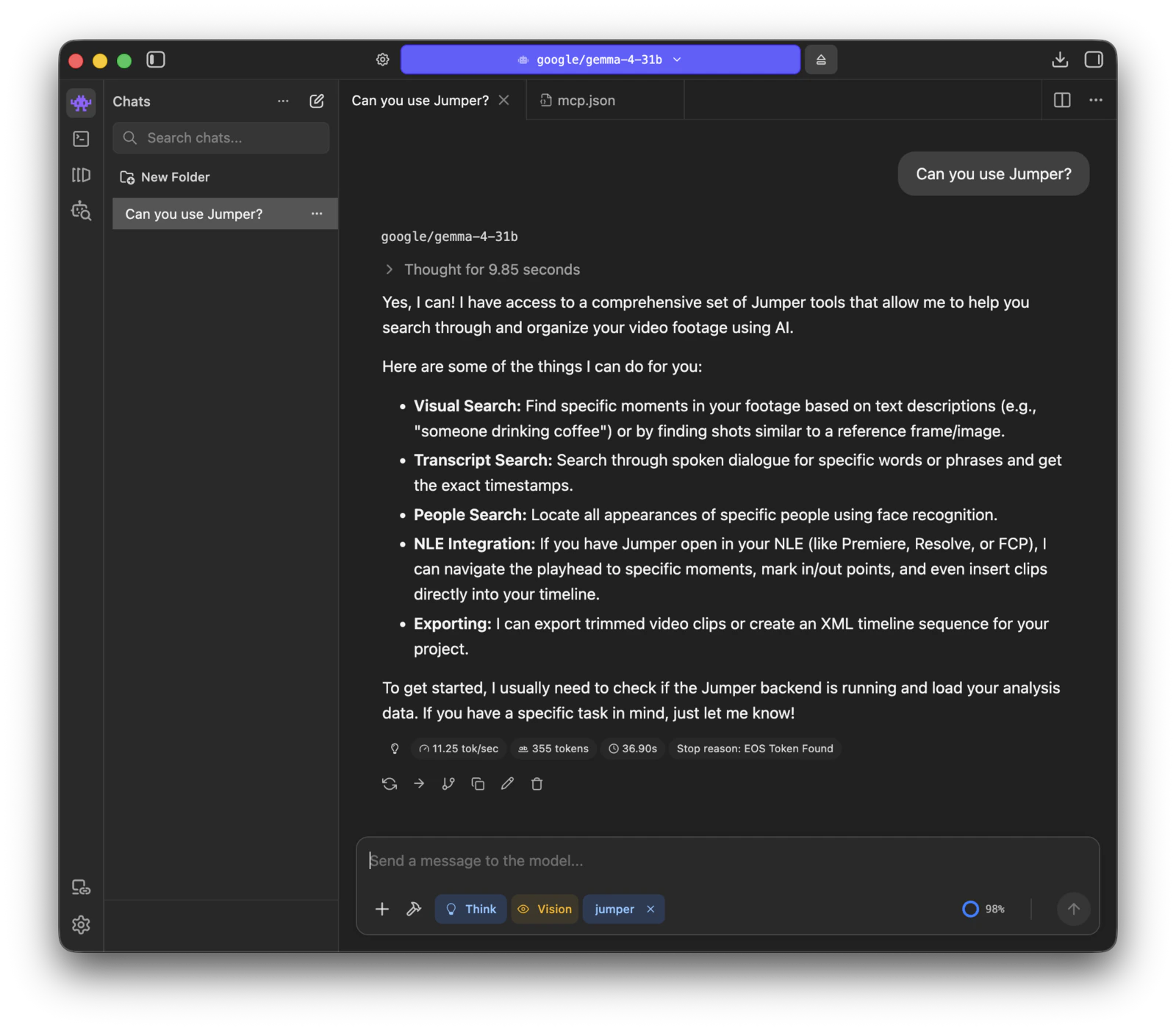

Make sure Jumper is active in the chat

Open or start a chat and confirm that `jumper` is attached in the composer before you send a prompt.

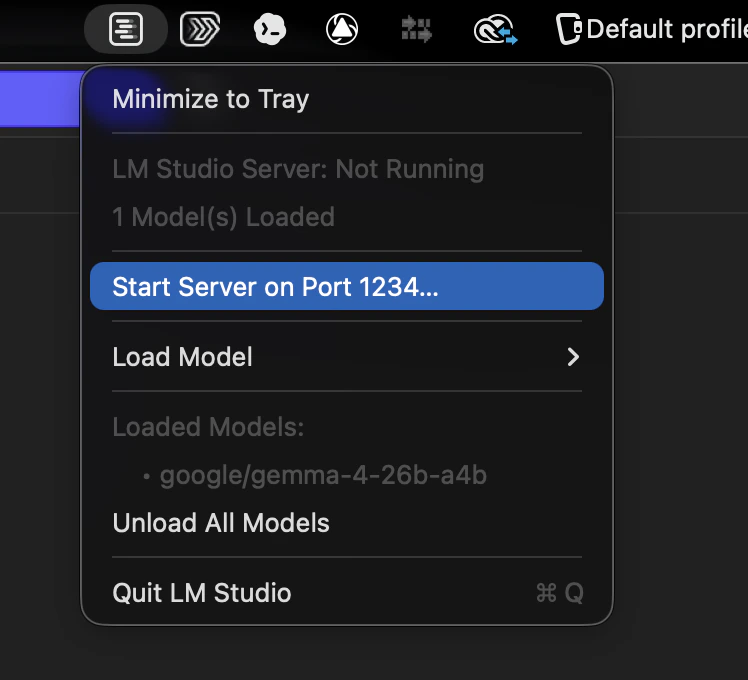

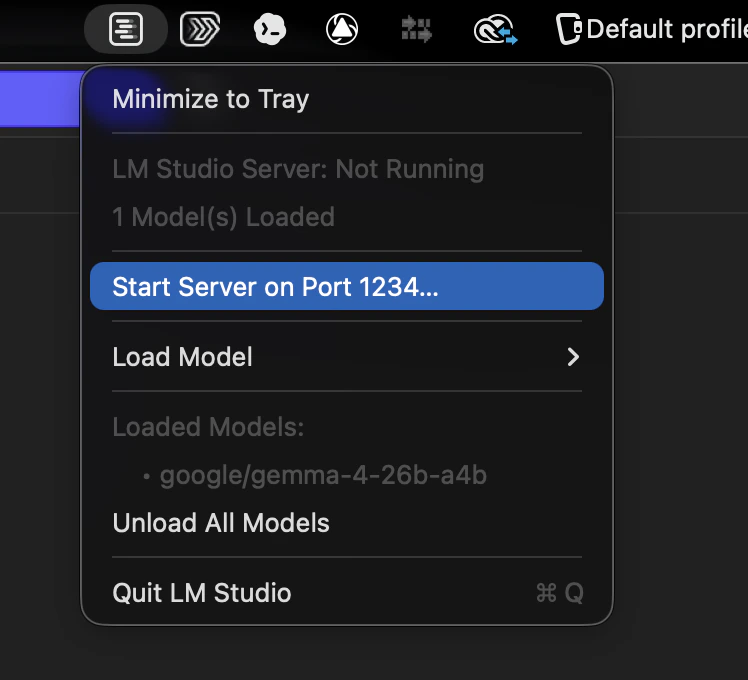

Start LM Studio's local server

Open the menu bar icon and choose Start Server on Port 1234.
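To confirm from outside the app that the server actually started, you can query it over HTTP. The sketch below assumes LM Studio exposes its OpenAI-compatible model listing at `/v1/models` on the port named in this step. The helper names `parse_model_ids` and `list_models` are illustrative only and are not part of Jumper or LM Studio.

```python
import json
import urllib.error
import urllib.request

# Assumption: the LM Studio server listens on the port from the step above
# and serves an OpenAI-style model listing at /v1/models.
BASE_URL = "http://127.0.0.1:1234"


def parse_model_ids(payload: dict) -> list[str]:
    """Extract model identifiers from an OpenAI-style /v1/models response."""
    return [entry["id"] for entry in payload.get("data", []) if "id" in entry]


def list_models(base_url: str = BASE_URL, timeout: float = 3.0):
    """Return the model ids the server reports, or None if it is unreachable."""
    try:
        with urllib.request.urlopen(f"{base_url}/v1/models", timeout=timeout) as resp:
            return parse_model_ids(json.load(resp))
    except (urllib.error.URLError, OSError, ValueError):
        # Connection refused, timeout, or a body that is not JSON.
        return None


if __name__ == "__main__":
    models = list_models()
    if models is None:
        print(f"No server answered at {BASE_URL}. Is the LM Studio server started?")
    else:
        print("Server is up. Models:", ", ".join(models) or "(none listed)")
```

If the script reports that no server answered, start the server from the menu bar icon again and rerun it.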

Troubleshooting

- `mcp/jumper` does not appear in Integrations: Check that your `mcp.json` is valid JSON and that Jumper is running locally on `http://127.0.0.1:6699`.

- The model behaves like it has no tools: Make sure `mcp/jumper` is enabled in Integrations and that `jumper` is attached in the current chat composer.

- The agent can search but does a poor job judging which shots are the best matches: Make sure LM Studio's local server is running on `http://127.0.0.1:1234`.

- The model is too slow or does not load: Choose a smaller Gemma 4 variant or lower the context length in LM Studio's load settings.
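The first three checks above can be run from a script. This is a minimal sketch resting on two assumptions this guide does not state: that LM Studio stores `mcp.json` under `~/.lmstudio/`, and that servers are listed under a top-level `mcpServers` object. Adjust both if your install differs. The function names are hypothetical helpers, not part of either tool.

```python
import json
import socket
from pathlib import Path

# Assumptions (not stated in this guide): LM Studio keeps mcp.json in
# ~/.lmstudio/ and lists servers under a top-level "mcpServers" object.
MCP_JSON = Path.home() / ".lmstudio" / "mcp.json"


def check_mcp_config(text: str, server_name: str = "jumper") -> str:
    """Describe whether the mcp.json text parses and mentions the server."""
    try:
        config = json.loads(text)
    except json.JSONDecodeError as err:
        return f"invalid JSON at line {err.lineno}, column {err.colno}: {err.msg}"
    servers = config.get("mcpServers") if isinstance(config, dict) else None
    if not isinstance(servers, dict) or server_name not in servers:
        return f"valid JSON, but no '{server_name}' entry under mcpServers"
    return "ok"


def port_open(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if something accepts TCP connections on host:port."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    if MCP_JSON.exists():
        print("mcp.json:", check_mcp_config(MCP_JSON.read_text(encoding="utf-8")))
    else:
        print(f"mcp.json: not found at {MCP_JSON}")
    # Ports taken from the troubleshooting list above.
    for name, port in (("Jumper", 6699), ("LM Studio server", 1234)):
        state = "reachable" if port_open("127.0.0.1", port) else "not reachable"
        print(f"{name} on 127.0.0.1:{port}: {state}")
```

A reachable port only shows that something is listening there. It does not prove the integration is enabled in Integrations or attached in the chat composer, so those two checks still have to be done in LM Studio itself.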

Related

Agentic editing

Overview of how AI agents use Jumper through MCP

Agentic editing and data privacy

What stays local and what the model actually receives